Incorporating infrastructure as code testing into the GitOps cycle can help DevOps teams and organizations improve automation and throughput without sacrificing quality and uptime.

● How can organizations reduce the time it takes to review configuration changes and still deploy safely?

● How can organizations ensure they are still in control of security, infrastructure, and resilience requirements ?

One of the main drivers to help Engineering teams move faster is GitOps, a way of implementing Continuous Deployment for cloud native applications. It focuses on a developer-centric approach when operating infrastructure, by using tools that developers are already familiar with, including Git and Continuous Deployment.

By managing Infrastructure as Code, (IaC) with tools such as terraform, organizations are able to use the same methods used for our application code.

IaC enables Ops teams to test and review proposed configuration changes before and after they are deployed to production and have a single source of truth for the entire environment.

Unit Testing

Static Code Analysis

Continuous Simulation

Post-deployment Validations and Testing

Unit tests are typically automated tests written and run by developers to ensure that a section of a code (known as the "unit") meets its design and behaves as it’s intended to.

As an example, let’s look at a terraform code that should create a VM. In this case, it really does create a VM and does not fail. These tests are usually done in Dev environments For reference, terratest is an awesome tool for terraform unit testing.

Static Code Analysis (also known as SourceCode Analysis) is performed as part of a Code Review. Static Code Analysis commonly refers to the running of Static Code Analysis tools that attempt to highlight possible vulnerabilities within “static” (non-running).

By using this method, organizations can enforce guardrails and detect security issues and any drifts from common best practices.

Some example of this:

● Ensure https is used on ingress.

● Volumes should be encrypted.

● Every resource must have a tag.

● Security Groups must be attached to EC2.

Tools:

● Datree

● Bridgecrew

● Snyk

Open Source

● OPA (Open Policy Agent)

● tflint

● terrafirma

● tfsec

● checkov

It enables you to simulate all possible dependencies to predict all possible outcomes, before deploying new configurations to production.

Once a PR is simulated, you can easily review the impact of each code line on your production environment's overall state, and avoid failures such as service interruptions, data loss, redundancy issues, and compliance breaches.

On top of the above, at this phase, you can enforce architectural and security standards which are at the system level, and not resource level, as we do in Static Code Analysis. Simulations enable teams to predict impact even in the most complex scenarios such as multi-cloud deployments, and across infrastructure stack layers--because they don't always play nicely together.

Continuous simulation allows you to fully automate your pipeline, and it eliminates the time-consuming code review between the parties by providing a full image of what production will look like once the change is applied.

Humans can’t see and assess the countless risks caused by all the dependencies in new configuration deployments. The more dependencies there are, the more that can go wrong. Thus, the higher the cost will be when business critical operations fail, customer service or payments stop, and time-to-market slows.

By using Simulation you can improve the resilience of configuration changes and reduce the time it takes to review infrastructure configuration by up to 90%.

For the simulation to work, it collects data from the real-time production state, and once a PR comes along, it Is inserted into the same mathematical model so that the future state can be predicted and tested.

Tools:

● Lightlytics

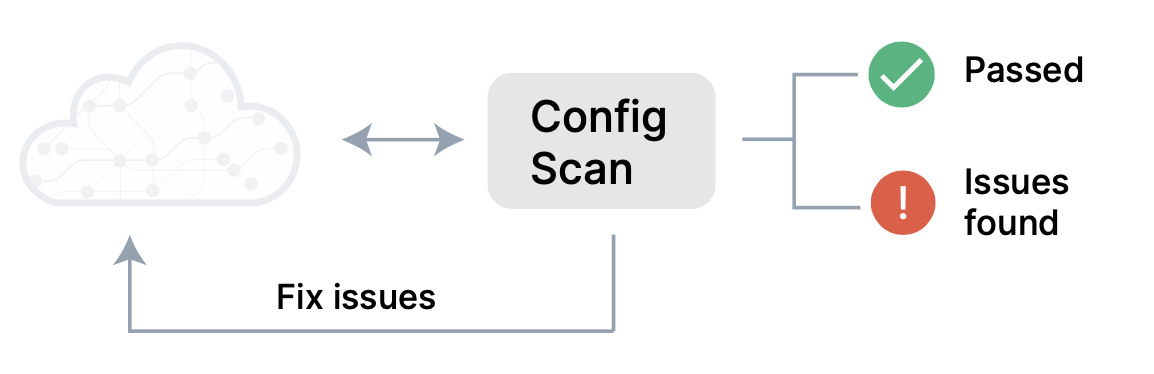

With post-deployment validation and testing, production configurations are validated against pre-defined rules and policies.

Tools:

● AWS security hub

One method of post-development validation is Chaos engineering, the discipline of experimenting on a software system in production. This is done in order to build confidence in the system's capability to withstand turbulent and unexpected conditions by injecting errors to validate infrastructure and application behavior. At a high level, chaos testing is simply creating the capability to continuously, but randomly, cause failures in production environments. This practice is meant to test the resiliency of the systems, as well as to determine MTTR.

Tools:

● ChaosIQ

● Gremlin

Open Source

● chaostoolkit

● chaosmonkey

Ensuring the needed gates and guardrails are in place to deploy Infrastructure code faster and with greater safety must become a standard practice. To learn more about Continuous Simulation and how it can help your team deploy each configuration change with total confidence, contact us.

If your team has been wondering how to empower your engineers while reducing risk, we’ll show you how easy it can be to make that a reality and explain more about our platform.

Stream Security is an AI Detection & Response (AI DR) company built for the era of AI-driven environments across cloud, on-prem, and SaaS. As AI agents operate with real permissions and attackers move at machine speed, Stream enables security teams to keep pace by continuously computing a real-time, deterministic model of their entire environment. Powered by its CloudTwin® technology, Stream instantly understands the full impact of every action across identities, permissions, networks, and resources, allowing organizations to detect, prioritize, and safely respond to threats before they propagate. This transforms security from reactive detection into a true control plane for modern infrastructure.